Nvidia aims for $1 trillion in revenue next year thanks to its next-generation AI chips, even competing with Intel as it expands into the CPU market.

At Nvidia's biggest annual event, CEO Jensen Huang unveiled a new product line. He predicted that the latest AI chip lineup would help the company achieve $1 trillion in revenue by 2027.

In his 2.5-hour opening speech at the GTC 2026 conference, Huang announced plans to enter the central processing unit (CPU) market, a sector long dominated by Intel.

According to Bloomberg, Nvidia also launched semiconductor products using technology supplied by Groq. The steady stream of new products shows that the company does not want to fall behind in the booming AI market.

Nvidia's efforts

Huang's speech revolved around a central message: demand for computing power keeps growing, and Nvidia is uniquely positioned to meet that demand at scale.

"I believe the demand for computing has increased a millionfold in the last two years. That's a feeling we all, all startups, are experiencing," Huang emphasized.

The $1 trillion revenue forecast is driven by orders for the Blackwell chip line and the new Vera Rubin architecture. This is evidence that market demand remains strong, although some investors are beginning to doubt whether such explosive growth can be sustained.

Previously, Nvidia projected its data center business would generate $500 billion in revenue by the end of 2026. The latest forecast extends that vision by another year and doubles the value.

The market reacted cautiously. After rising 4.8% early in the session, Nvidia shares pared their gains and closed at $183.19 in New York, up 1.6%.

Nvidia's annual revenue growth rate. Photo: Bloomberg.

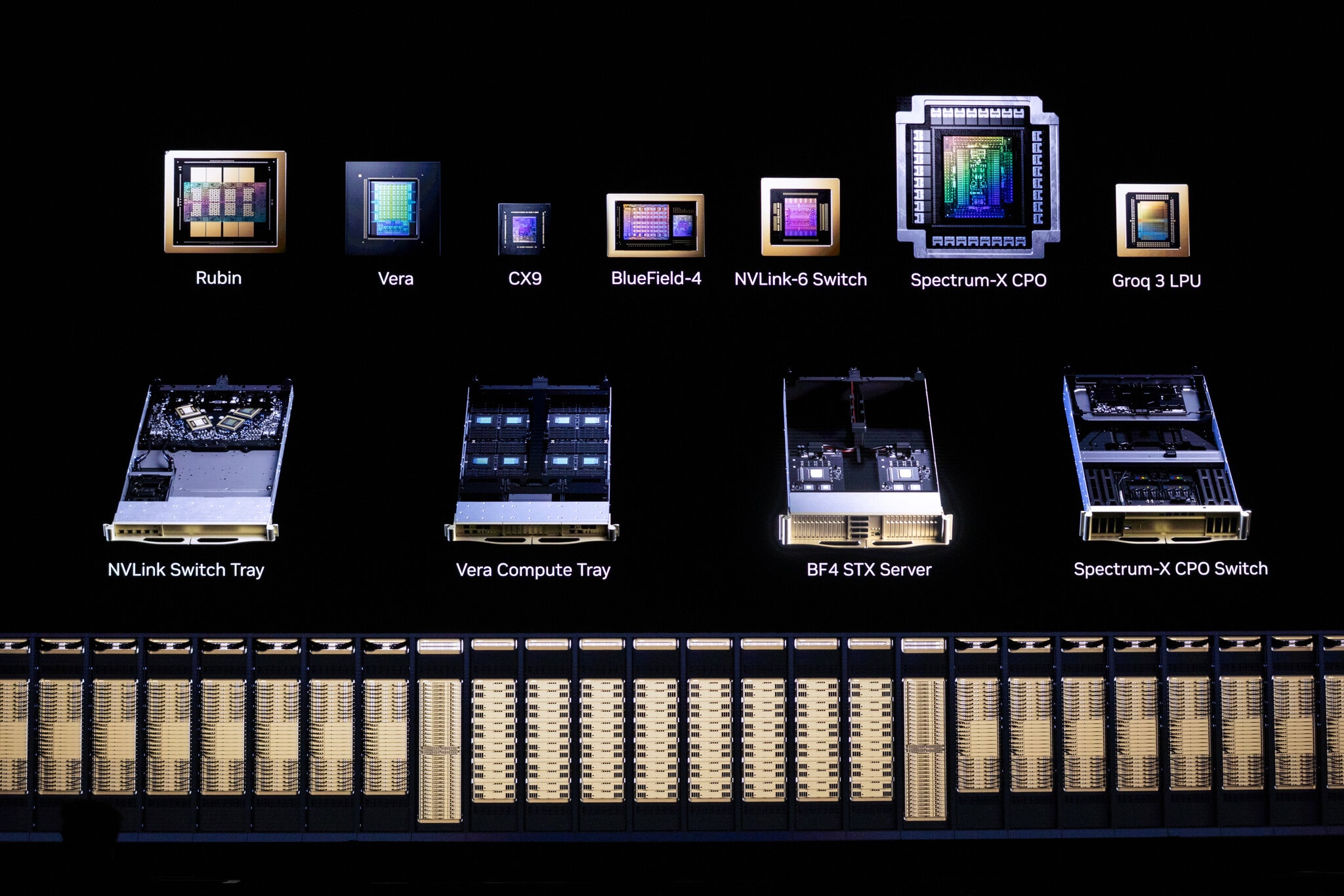

At GTC 2026, Nvidia showcased chips using technology from Groq. The company also presented computers built entirely around CPUs, which it says will create a multi-billion dollar market.

According to Bloomberg, GTC 2026 is Nvidia's latest effort to promote AI technology and retain loyal customers. The company also used the conference to announce partnerships with several major companies, aiming to demonstrate the increasing usefulness of AI.

Nvidia's list of partner companies currently includes IBM, Hewlett Packard Enterprise, and Adobe. Nvidia is also strengthening its relationship with Uber, with the two aiming to develop a self-driving car system controlled by Nvidia software by 2028.

Nvidia's heavy investment in AI has made it the world's most valuable company. Of course, competitive pressure is always present from rivals like AMD, as well as from customers who want to manufacture their own AI chips to save costs.

Defending the throne

Nvidia has accelerated its chip development. The next design of its flagship AI processor, expected to appear in the second half of 2026, is named Vera Rubin, in honor of the pioneering astronomer who discovered evidence of dark matter.

Following Vera Rubin, the next generation of chips is named after Richard Feynman, the American physicist who died in 1988. According to Nvidia, this generation will feature customizable high-bandwidth memory, although detailed specifications have not yet been revealed.

In addition, the Groq 3 language processing unit (LPU) has been added to Nvidia's product lineup. The LPU is a specialized chip designed to accelerate inference in large language models, helping AI respond to commands faster.

The LPU incorporates high-speed memory, enabling near-instantaneous text processing. Nvidia will offer the Groq LPU as a secondary processor, used in conjunction with its existing accelerators.

Over the past year, AI companies have shifted their focus. AI systems built on Nvidia chips have become markedly more capable: they can write software code, conduct research, and create images and videos. These capabilities are the result of the inference process.

The inference process places higher demands on chips. Hardware now needs to generate data as cheaply and as quickly as possible.

The products announced by Nvidia at GTC 2026. Photo: New York Times.

On cost and speed, Nvidia chips do not have an advantage. Competitors are pulling ahead, notably Google with its own tensor processing units (TPUs). Some emerging companies, such as Cerebras, also have specialized hardware for running AI.

In December 2025, Nvidia announced a $20 billion agreement to use certain technologies and designs from Groq, a company specializing in custom chips for inference.

Samsung Electronics manufactures Groq chips using the 4nm process. At GTC 2026, the South Korean company also introduced its next-generation high-bandwidth memory chip, HBM4E.

According to the New York Times, Nvidia's continuous release of new chips shows how rapidly the AI market is changing. The corporation is willing to do whatever it takes to maintain its position as the leading chip manufacturer.

In just three years, Nvidia has become a driving force behind the US economy. The company's hardware accounts for over 90% of the AI market. CEO Jensen Huang clearly doesn't want to relinquish this dominant position.

"They will string these technologies together to defend their throne," said Daniel Newman, president of technology analytics firm Futurum Group.

Nvidia's new direction

Nvidia's strategy is yielding positive results. Last month, OpenAI announced an agreement with Nvidia to supply specialized inference chips. However, Newman predicts Nvidia may capture only about one-third of the market for chips that power AI systems, even though the company still holds 90% of the market for chips used to develop those systems.

Nvidia also confirmed that the Vera Rubin CPU line will offer superior performance compared to its predecessors. The company stated that as AI data centers grow more complex, coordinating work between computers and software, a task suited to versatile CPUs, is becoming more important.

Vera Rubin combines the characteristics of CPUs found in data centers, gaming PCs, and laptops. This chip line is capable of processing multiple input streams simultaneously, while still quickly handling complex single tasks. Nvidia also emphasizes that the new chips will consume less power.

Nvidia CEO Jensen Huang at GTC 2026. Photo: Bloomberg.

Notably, Nvidia will be selling computer systems built entirely from CPUs. These devices can operate independently or in combination with other GPU-based systems.

To keep up with the trend of AI agents, systems that can automatically perform many tasks, Nvidia also introduced NemoClaw. This solution helps software companies deploy agents for various purposes.

According to Bloomberg, this is Nvidia's attempt to move beyond its core strength of GPUs, which were previously used only for training and running AI software. Now, the company offers complete computing systems with processors, networking, and software.

Nvidia also provides AI models and open-source software, meaning customers can customize the technology to their liking. The company is also willing to customize software for specific purposes, focusing on industries where AI has high application potential.

Nvidia quickly became a leading chip supplier in data centers. However, as software becomes more powerful, many companies are exploring using CPUs to run trained models to save costs and energy. Compared to data center GPUs, CPUs are generally cheaper, more versatile, and consume less power.

Until now, Nvidia has only offered CPUs bundled with its other types of chips. Widespread standalone CPU sales could pose a significant challenge to Intel, as well as to the internal chip development efforts of Amazon or SoftBank.